In Canada, data sovereignty is often cited as the rationale for building AI data centres. However, many of the companies building data centres in Canada are American-owned and connected with U.S. surveillance and military operations.

For instance, Alberta-based Beacon Data Centers, which is slated to build a new data centre in Lorneville, New Brunswick, is owned by Nadia Partners, an investment firm headquartered in New York. Multiple levels of government have pushed the Beacon project through despite objections from the community over health and safety issues.

In March 2025, the New Brunswick government announced a $905,000 reduction in provincial funding for tourism. A year later, it unveiled its new tourism chatbot Explora, built by American company Matador Network. A government spokesperson said that no Canadian company could offer what Matador Network’s AI model could. A journalistic investigation also revealed the chatbot to be rife with errors.

Currently, the U.S. and China are the dominant players in the AI business. AI infrastructure built in Canada, such as data centres, stands to be used principally by foreign AI companies. This means many Canadian regulations on safety, health, privacy, and ethics do not apply to the end users of this infrastructure.

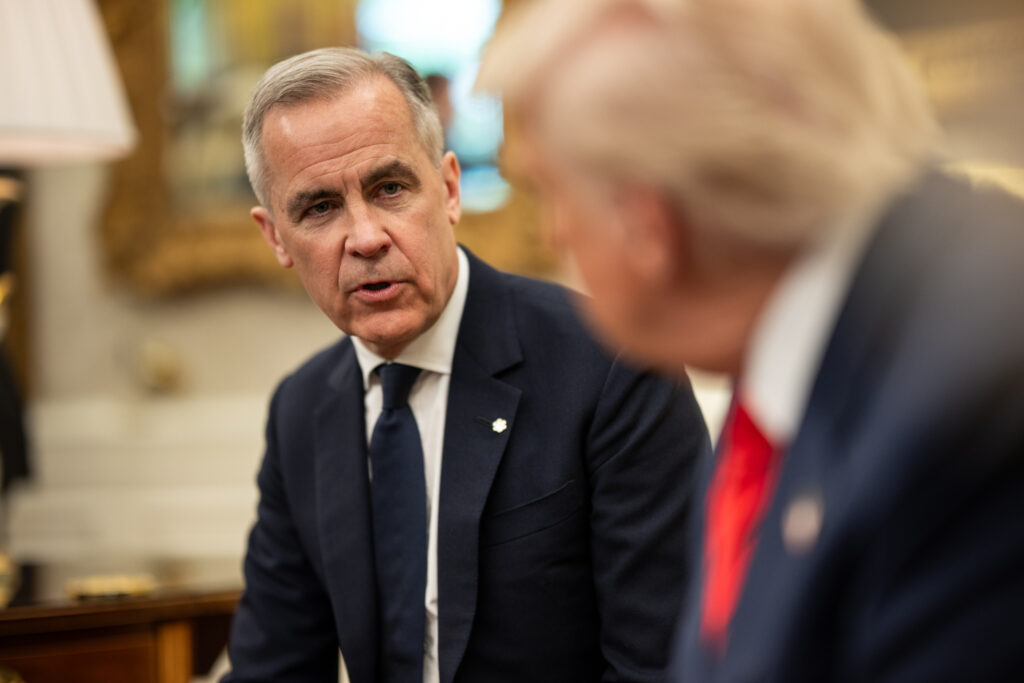

Prime Minister Mark Carney dropped the digital services tax in June 2025, to the benefit of U.S. tech giants. Carney personally holds nearly US$7 million in Brookfield Asset Management stock options. In November 2025, Brookfield announced an investment of $100 billion in a program to build AI infrastructure.

Cohere, a Canadian AI startup funded by taxpayers, builds large language models and AI programs for businesses and government. It uses Google infrastructure and is partnered with the American companies Oracle, Nvidia and Salesforce. Another American company, CoreWeave, built Cohere’s data centre in Cambridge, Ontario.

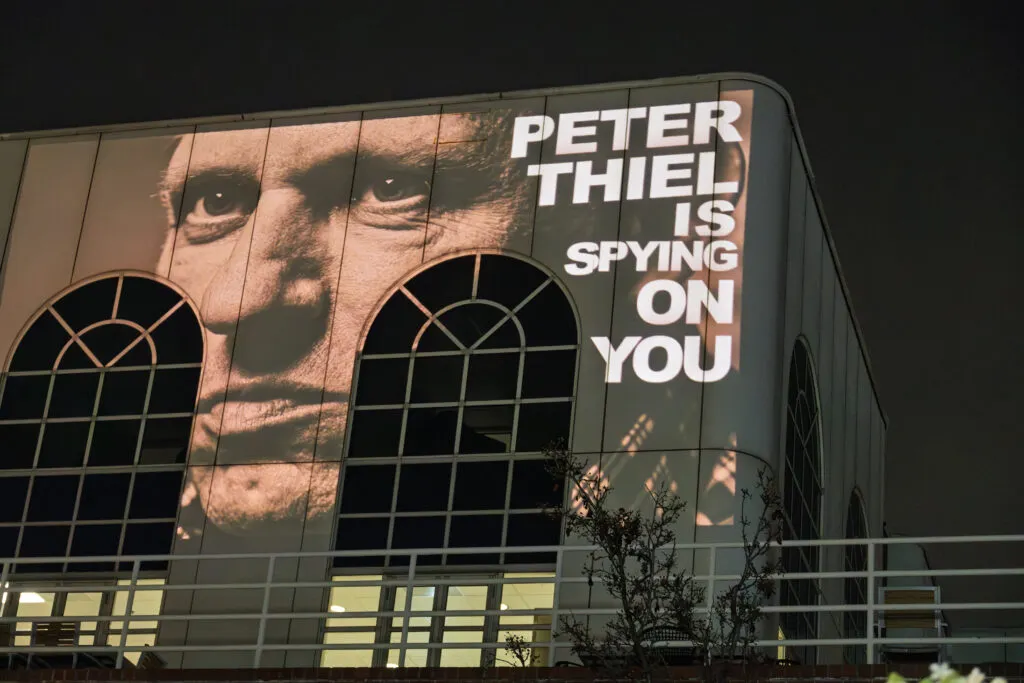

Cohere also has a partnership with Palantir, a U.S. surveillance and AI company whose technologies have been used to further the genocide in Palestine, in the war with Iran and by ICE to target and deport innocent people. Cohere deploys AI models via Palantir’s Foundry platform.

Fourteen publishers have brought a lawsuit against Cohere for using their articles without permission to train AI systems.

Canadian AI specialists and computer scientists Yoshua Bengio and Geoffrey Hinton say there are currently no Canadian companies offering a consumer chatbot. They have called for a coalition of countries to build an ethical version for the public and warn that serious AI regulations need to be developed.

The Artificial Intelligence and Data Act, a proposed law to establish rules for responsible AI development, died in the federal Parliament in January 2025. Labour and human rights groups raised concerns about the proposed law’s lack of protections for workers and vulnerable communities.

Meanwhile, Canada’s Senate Committee on Human Rights is still conducting its study of the impacts of artificial intelligence on human rights and economic security. AI’s potential dangers for public health, the environment, and workers nationwide have yet to be fully understood or regulated.
